AI Model Explosion 2026: Enterprise Strategy Guide

Claude Mythos, GPT-6, Gemma 4 — what enterprise leaders must do now.

April 2026 will be remembered as the month the frontier moved so fast that one of the world's leading AI labs chose not to cross it. Anthropic's Claude Mythos, described internally as "the most capable model we've ever built," sits unreleased behind a locked door, not because it failed, but because it succeeded too spectacularly. Meanwhile, GPT-6 is days away from launch, Google has released Gemma 4 under the permissive Apache 2.0 license, and self-hosted LLM economics have crossed the inflection point that makes API-only strategies look short-sighted.

For enterprise technology leaders, this month is not background noise. It is a strategic inflection point that demands an updated framework for how you evaluate, procure, and govern AI capabilities.

The Mythos Moment: When Safety Overrides the Ship Date

In late March, a data leak confirmed what Anthropic insiders had been whispering about for weeks: the company had trained a new frontier model — codenamed Mythos — that represented what they called "a step change" in AI capabilities. By April 7, Anthropic made its position official via TechCrunch and a dedicated red-team disclosure on red.anthropic.com: Claude Mythos Preview would not receive a public release.

The reason is blunt and unprecedented. Over several weeks of internal testing, Anthropic used Mythos Preview to identify thousands of previously unknown zero-day vulnerabilities across every major operating system and every major web browser. Officials at Anthropic described it as the first AI model capable of bringing down a Fortune 100 company, crippling major internet infrastructure, or penetrating national defense systems. NBC News reported that Anthropic developers themselves characterized the model as "too dangerous to release."

This is not a PR move. It is the first time in roughly seven years of large language model development that a leading AI laboratory has publicly withheld a model on safety grounds — and done so in a way that acknowledges the model's capabilities rather than minimizing them.

Instead of a public release, Anthropic launched Project Glasswing, a controlled-access program providing over 50 technology organizations with access to Mythos Preview and more than $100 million in usage credits. The explicit purpose is defensive: use the model's unmatched vulnerability-discovery capability to find and fix critical software flaws before adversaries do.

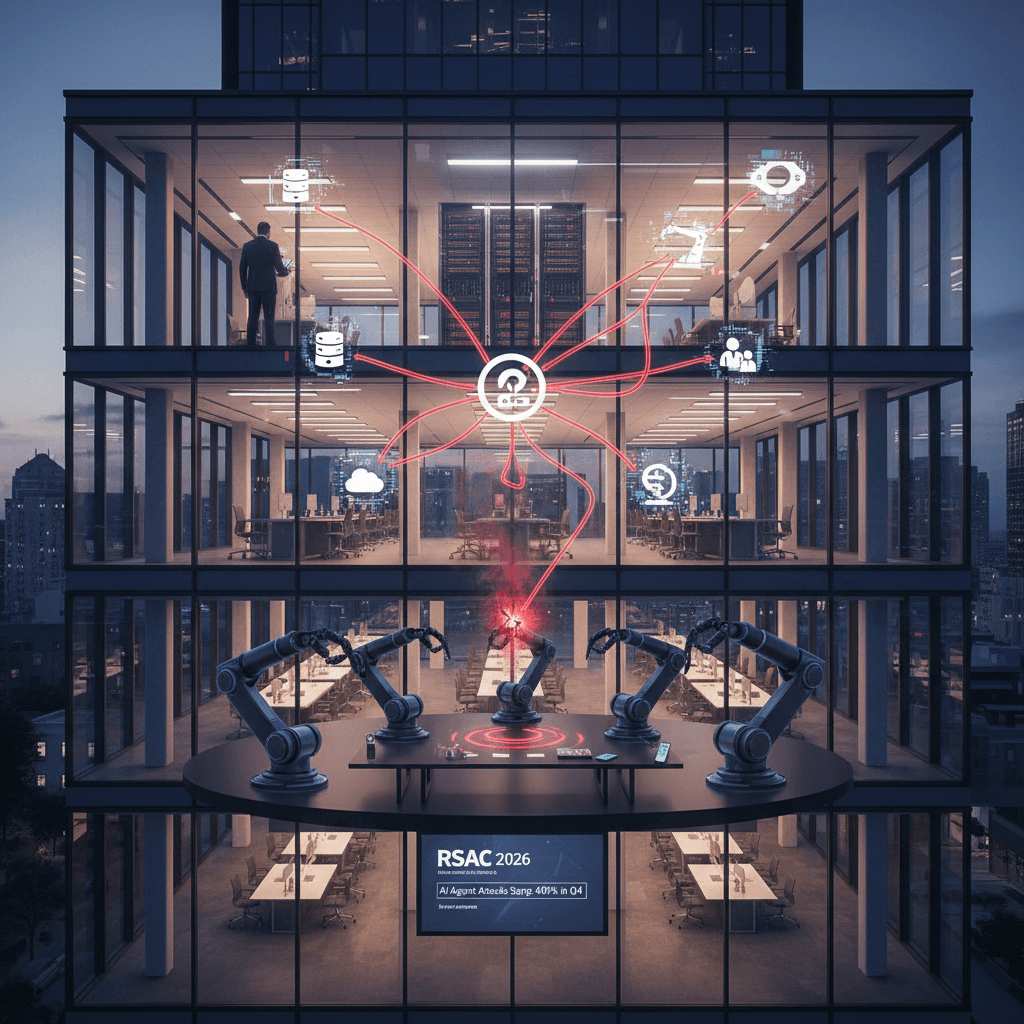

What This Signals for Enterprise Security Teams

The Mythos situation crystallizes a tension that enterprise security architects have been quietly debating for two years: AI models are now sophisticated enough to function as asymmetric cyber weapons. The same capability that allowed Mythos to discover thousands of zero-days in a matter of weeks could, in the wrong hands, be directed offensively at enterprise infrastructure.

Three immediate implications deserve board-level attention:

Patch velocity must increase. If a frontier model can identify critical vulnerabilities across your entire operating system and browser stack in hours, your patch management cadence — still measured in weeks or months at many enterprises — is structurally inadequate. The threat model has changed.

Insider threat surface is expanding. Project Glasswing grants 50-plus organizations controlled access. As models approaching Mythos-class capability proliferate through fine-tuning, distillation, and open-weight derivatives, the assumption that only nation-state actors can execute sophisticated exploit chains will no longer hold.

Defensive AI investment is no longer optional. The organizations in Project Glasswing gain an asymmetric defensive advantage. Enterprises not in that cohort should be evaluating equivalent vulnerability-discovery tooling — even if it means engaging directly with security-focused AI vendors — before Mythos-class capabilities appear in the open market.

GPT-6: The Architecture Shift That Changes the Cost Equation

While Anthropic holds its most powerful model back, OpenAI is preparing to ship one of the most architecturally significant model releases in the company's history. Pre-training on the model internally codenamed "Spud" completed March 24 at the Stargate data center in Abilene, Texas. Polymarket traders were giving 78% odds of a launch by April 30 as of this writing, with OpenAI insiders signaling a "next week" timeline.

The architectural departure from previous GPT generations is substantial. Rather than a single large model handling every query, GPT-6 deploys a four-agent inference framework: a coordination agent, a verification agent, a logic agent, and a creativity agent. This architecture is designed to drive hallucination rates below 0.1% by having the verification agent review outputs before they are returned. Combined with a 2-million-token context window and native multimodal processing across text, images, audio, and video, the performance benchmarks point to a 40% improvement over GPT-5.4.

Critically, pricing is unchanged from current rates: $2.50 per million input tokens and $12 per million output tokens.
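To make the pricing concrete, the arithmetic behind a monthly token bill at these rates can be sketched as follows. The two rates are the ones quoted above; the workload figures (request count and the input/output token split) are hypothetical and chosen only for illustration.

```python
# Rates quoted in the article, in USD per million tokens.
INPUT_RATE_PER_M = 2.50
OUTPUT_RATE_PER_M = 12.00


def monthly_api_cost(requests_per_day: int,
                     input_tokens_per_request: int,
                     output_tokens_per_request: int,
                     days_per_month: int = 30) -> float:
    """Estimate a monthly token bill in USD at the quoted flat rates."""
    requests = requests_per_day * days_per_month
    input_cost = requests * input_tokens_per_request / 1_000_000 * INPUT_RATE_PER_M
    output_cost = requests * output_tokens_per_request / 1_000_000 * OUTPUT_RATE_PER_M
    return round(input_cost + output_cost, 2)


# Hypothetical workload: 10,000 requests/day, 3,000 input and 500 output tokens each.
print(monthly_api_cost(10_000, 3_000, 500))  # 4050.0
```

Because output tokens cost nearly five times as much as input tokens at these rates, generation-heavy workloads deserve separate line items in any budget model.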

What GPT-6 Means for Enterprise AI Workloads

The four-agent architecture is not just a performance improvement; it changes the economics of tasks that currently require human review loops. Document processing, contract analysis, regulatory compliance checking, and multi-step financial modeling have historically required either expensive human oversight or tolerance of a meaningful hallucination rate. The verification architecture targets that specific gap.

For enterprises evaluating GPT-6 against current-generation models, the 2-million-token context window is worth particular attention. Most enterprise document corpora (loan agreements, ERP audit trails, clinical trial datasets, legal discovery files) were previously processed through chunking strategies that introduced boundary errors. A 2M-token context window removes the need for most of that chunking and allows whole-corpus reasoning in a single pass.
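Whether a given corpus actually fits in one pass is a simple budget check. The sketch below assumes a rough heuristic of about four characters per token, which varies by tokenizer and language, and a hypothetical reserve for the model's output. Only the 2-million-token window comes from the text above.

```python
CONTEXT_WINDOW_TOKENS = 2_000_000   # window size cited in the article
CHARS_PER_TOKEN = 4                 # assumption: rough heuristic, varies by tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate from character count (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_single_pass(documents: list[str], reserved_for_output: int = 50_000) -> bool:
    """True if the whole corpus plus an output reserve fits in the window."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserved_for_output <= CONTEXT_WINDOW_TOKENS


# Hypothetical corpus: ten documents of 400,000 characters each.
corpus = ["x" * 400_000] * 10
print(fits_in_single_pass(corpus))
```

A corpus that fails this check still needs chunking or retrieval, so the estimate is best replaced with the provider's own tokenizer count before committing to a design.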

OpenAI's rollout plan follows a staged model: ChatGPT Plus and Pro first, free tier 2–4 weeks later, then Enterprise API access. Enterprises currently on GPT-5.4 contracts should start planning for migration testing now rather than waiting for general availability.

Gemma 4: Open Source Finally Gets Serious About Enterprise

On April 2, Google DeepMind released Gemma 4 — and the licensing change alone would have made headlines in any other month. All four model variants (Effective 2B, Effective 4B, 26B Mixture of Experts, and 31B Dense) ship under the Apache 2.0 license, removing the custom licensing restrictions that blocked many enterprises from deploying previous Gemma versions in production.

The performance numbers are legitimately competitive. The 31B Dense model currently ranks third among all open-weight models on the Arena AI text leaderboard. The 26B MoE variant holds the sixth spot. For comparison, both models outperform what proprietary frontier models could deliver just eighteen months ago.

Technically, Gemma 4 is multimodal by default — all variants process text, images, and video natively, with the smaller models adding audio input for speech recognition. Context windows run 128K tokens on edge variants and up to 256K on larger models. Native training across 140-plus languages makes Gemma 4 one of the most globally deployable open-weight options available.

The Enterprise Case for Running Gemma 4 In-House

The combination of frontier-adjacent performance and Apache 2.0 licensing opens deployment scenarios that were impractical eighteen months ago. For healthcare, financial services, legal, and defense contractors operating under data residency or privacy regulations, self-hosting Gemma 4 eliminates the data-sovereignty problem entirely. Patient records, trading strategies, privileged legal communications, and classified briefings never traverse an external API endpoint.

The deployment path is also more accessible than it has ever been. Gemma 4's 26B model runs comfortably on two to four data-center-class A100 or H100 GPUs, and Google Cloud's Vertex AI offers managed hosting for organizations that want cloud scalability without data egress. The Apache 2.0 license means no usage caps, no per-seat licensing, and no restrictions on what tasks you can apply it to, a meaningful contrast with proprietary API agreements that constrain derivative works or commercial use cases.

The Open-Source Parity Problem (And Why It's Actually an Opportunity)

Gemma 4 is not operating in isolation. The broader open-weight ecosystem has reached what analysts are calling a performance convergence event. DeepSeek V3.2 delivers 90% of GPT-5.4 quality at 1/50th the API cost. GLM-5.1 (MIT license) sits within 3 benchmark points of Claude Opus 4.6 on SWE-bench. Kimi K2 Thinking leads the open-source SWE-bench Pass@1 leaderboard.

The gap between "what you can run yourself" and "what you must pay OpenAI or Anthropic for" has narrowed to the point where it primarily applies to the top 5–10% of use cases requiring absolute frontier performance.

Q1 2026 saw 255 model releases from major organizations — an average of nearly three per day. The pace is not slowing. For enterprises still operating on a "pick one frontier provider and standardize" strategy, this proliferation creates both a risk (vendor lock-in as the landscape shifts underneath you) and an opportunity (the ability to right-size model selection for specific workloads).

The Economics of Self-Hosting in 2026

The self-hosting calculus has shifted meaningfully. Consider a mid-market enterprise running 500 API calls per day averaging 2,000 tokens each. A rough cost comparison at current rates:

Proprietary API options:

- GPT-5.4 API: ~$1,500–$3,000 per month. No data sovereignty. Limited latency control.

- Claude Opus 4.6 API: ~$2,000–$4,000 per month. No data sovereignty. Limited latency control.

Self-hosted open-weight options (amortized GPU cost over 24 months):

- Gemma 4 26B on-premises (A100): ~$400–$600 per month. Full data sovereignty. Full latency control.

- DeepSeek V3.2 self-hosted: ~$300–$500 per month. Full data sovereignty. Full latency control.

At current API pricing, an organization spending more than $500 per month on inference costs typically achieves ROI on self-hosted infrastructure within six months. For high-volume workloads — document processing, customer service, code review pipelines — that crossover can come in weeks.
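The crossover point can be estimated with a simple payback calculation. This is a minimal sketch that treats hardware as an upfront purchase and tracks running costs separately, rather than using the amortized monthly figures above. All dollar amounts in the example are hypothetical.

```python
import math
from typing import Optional


def payback_months(upfront_hardware_cost: float,
                   monthly_api_spend: float,
                   monthly_self_host_opex: float) -> Optional[int]:
    """Months until cumulative savings cover the upfront hardware cost.

    Returns None when self-hosting does not save money each month.
    """
    monthly_savings = monthly_api_spend - monthly_self_host_opex
    if monthly_savings <= 0:
        return None
    return math.ceil(upfront_hardware_cost / monthly_savings)


# Hypothetical: $12,000 of hardware, $3,000/month API bill, $500/month power and hosting.
print(payback_months(12_000, 3_000, 500))  # 5
```

The calculation ignores staffing and migration effort, which for small teams can dominate the hardware line, so the result is an optimistic lower bound.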

Navigating the Model Selection Framework: A Decision Architecture for April 2026

With this many variables in motion, enterprises need a structured approach to model selection rather than reactive procurement. The CGAI framework treats model selection as a three-axis decision:

Axis 1: Capability Requirements. Define the actual task. Is your workload in the top 10% of difficulty requiring frontier models (complex legal reasoning, novel scientific analysis, sophisticated code generation)? Or does it fall in the 70–80% tier where Gemma 4, DeepSeek V3.2, or similar open-weight models are sufficient? Over-provisioning model capability is a real and underappreciated cost driver that most enterprises have not yet audited.

Axis 2: Sovereignty and Compliance. Map your data classification to your deployment model. PHI, PII, financial trading data, attorney-client privileged information, and export-controlled technical data all carry regulatory requirements that may prohibit or complicate API-based processing. Document your data flows before selecting a deployment model, not after.

Axis 3: Economic Horizon. Model pricing is not static. Build your business cases around volume tiers, not point-in-time pricing. API costs for frontier models have declined roughly 80% over two years; infrastructure costs for self-hosted deployment continue their own downward trajectory. Use a 24-month net present value comparison rather than a monthly cost snapshot.
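A 24-month net present value comparison can be sketched as below. The discount rate and every cash-flow figure are hypothetical placeholders, and flat monthly costs are a simplification. A fuller model would also apply the expected decline in API prices.

```python
def npv(monthly_cash_flows: list[float], annual_discount_rate: float) -> float:
    """Net present value of monthly cash flows; the first flow occurs at month 0."""
    monthly_rate = (1 + annual_discount_rate) ** (1 / 12) - 1
    return sum(cf / (1 + monthly_rate) ** t
               for t, cf in enumerate(monthly_cash_flows))


HORIZON_MONTHS = 24
DISCOUNT_RATE = 0.08  # assumption: 8% annual cost of capital

# Costs are negative cash flows. All amounts are hypothetical.
api_option = [-3_000.0] * HORIZON_MONTHS
self_hosted = [-30_000.0] + [-800.0] * (HORIZON_MONTHS - 1)  # capex, then opex

print("API NPV:        ", round(npv(api_option, DISCOUNT_RATE), 2))
print("Self-hosted NPV:", round(npv(self_hosted, DISCOUNT_RATE), 2))
```

The option with the NPV closer to zero is the cheaper one over the horizon. Rerunning the comparison at several volume tiers shows where the preference flips.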

Applying this framework to a real scenario illuminates the tradeoffs clearly. For a healthcare organization analyzing clinical documentation — where PHI regulation is non-negotiable — Gemma 4 26B self-hosted scores higher than GPT-5.4 via API not because it is more capable in absolute terms, but because the compliance axis eliminates the API option entirely. Capability alone does not determine the right deployment model. The point is not that one model always wins. It is that the decision deserves structured analysis rather than defaulting to the most prominent vendor name in the room.
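The way a compliance constraint can override capability is easy to express as an ordered rule check. The sketch below is a simplification for illustration, not CGAI's actual scoring method. The field names, the rule order, and the final fallback label are assumptions, and the $500 threshold is the monthly figure cited earlier in the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    needs_frontier_capability: bool   # Axis 1: capability requirements
    data_may_leave_premises: bool     # Axis 2: sovereignty and compliance
    monthly_api_cost_usd: float       # Axis 3: economic horizon input


def recommend_deployment(w: Workload, self_host_threshold_usd: float = 500.0) -> str:
    """Apply the three axes in order, treating compliance as a hard constraint."""
    if not w.data_may_leave_premises:
        return "self-hosted open-weight"
    if w.needs_frontier_capability:
        return "frontier API"
    if w.monthly_api_cost_usd > self_host_threshold_usd:
        return "self-hosted open-weight"
    return "hosted open-weight or low-cost API"


clinical_docs = Workload("clinical documentation", True, False, 2_500.0)
print(recommend_deployment(clinical_docs))  # self-hosted open-weight
```

Checking compliance first mirrors the healthcare scenario: the API option is eliminated before capability or cost is even considered.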

What This Means for Your AI Governance Program

April 2026's model landscape creates three governance challenges that organizations without mature AI programs have not yet encountered:

The Withheld Capability Problem. When a model like Claude Mythos exists but is not publicly available, enterprises face an asymmetric threat environment. Adversaries with access (through insider networks, Project Glasswing partnerships, or future derivatives) may have capabilities that defensively oriented organizations lack. This argues for investment in AI-assisted threat intelligence and vulnerability scanning tools that approximate Mythos-class defensive capabilities, even if not at the same performance ceiling.

The Proliferation Governance Problem. With 255 model releases in a single quarter, your organization almost certainly has teams deploying unapproved models in shadow AI workflows. The answer is not prohibition; it is governance with real tooling. Model inventories, approved model registries, and API gateway architectures that route and log model calls are table stakes for 2026.
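An approved-model registry with gateway-style logging can start very small. In the sketch below the model identifiers, data classes, and log format are all hypothetical. A production gateway would sit in the network path and persist its logs, but the authorization logic has the same shape.

```python
import logging
from datetime import datetime, timezone

logger = logging.getLogger("model_gateway")

# Hypothetical registry: model id -> most sensitive data class it is cleared for.
APPROVED_MODELS = {
    "gemma-4-26b-onprem": "restricted",
    "frontier-api-model": "internal",
}
DATA_CLASS_RANK = {"public": 0, "internal": 1, "restricted": 2}


def authorize_call(model_id: str, data_class: str, team: str) -> bool:
    """Allow a call only for registered models cleared for the data class, and log it."""
    ceiling = APPROVED_MODELS.get(model_id)
    allowed = (ceiling is not None
               and DATA_CLASS_RANK[data_class] <= DATA_CLASS_RANK[ceiling])
    logger.info("ts=%s team=%s model=%s data_class=%s allowed=%s",
                datetime.now(timezone.utc).isoformat(),
                team, model_id, data_class, allowed)
    return allowed


print(authorize_call("frontier-api-model", "restricted", "claims-ops"))  # False
```

Logging denied calls as well as approved ones is deliberate: the denials are what reveal shadow AI usage.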

The Upgrade Cycle Problem. The model landscape is moving faster than enterprise software procurement cycles. An AI model selected through a 9-month RFP process and deployed in year one of a three-year contract may be two generations behind the state of the art before the contract expires. Build model-agnostic application layers where possible. Prefer abstraction over direct vendor SDK integration.
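One way to keep application code independent of any vendor SDK is a narrow interface that each backend adapts to. This is a minimal sketch: `TextModel`, `EchoModel`, and `summarize` are hypothetical names, and the stand-in backend only echoes its input, where a real adapter would wrap a vendor client or a local inference server.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface application code is allowed to depend on."""

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend for illustration; it performs no real inference."""

    def __init__(self, label: str) -> None:
        self.label = label

    def generate(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


def summarize(model: TextModel, document: str) -> str:
    """Application logic written against the interface, not a vendor SDK."""
    return model.generate(f"Summarize: {document}")


print(summarize(EchoModel("local"), "contract"))  # [local] Summarize: contract
```

Swapping providers then means writing one new adapter class rather than touching every call site.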

Strategic Implications: The Five Actions for Q2 2026

Based on the current model landscape, CGAI recommends the following priorities for enterprise technology leaders heading into Q2:

1. Audit your current AI cost structure. Pull your API invoices for Q1. Map costs to workloads. Identify whether any workloads above $500/month are candidates for open-weight self-hosting. The Gemma 4 Apache 2.0 licensing makes this conversation significantly simpler than it was six months ago.

2. Classify your AI data flows. Before GPT-6 enterprise API access becomes available, complete a data classification mapping for AI workloads. Know which datasets can traverse external APIs and which require on-premises processing. This analysis will accelerate every future model procurement decision.

3. Engage your security team on AI-assisted vulnerability discovery. The Mythos situation is a signal, not an isolated event. Models capable of zero-day discovery at scale will diffuse into the broader ecosystem. Your patching and vulnerability management programs need to be evaluated against a threat model where adversaries have AI-assisted exploit generation.

4. Develop a multi-model deployment architecture. The organizations that will navigate the next 24 months most effectively are those that treat model selection as a routing problem, not a single-vendor commitment. Build application layers that can direct workloads to the right model tier: open-weight for high-volume commodity tasks, frontier API for complex reasoning, on-premises for regulated data.

5. Track GPT-6 enterprise availability. The four-agent verification architecture is the most significant hallucination mitigation advance in a major commercial model to date. For workloads where hallucination rates have blocked production deployment, GPT-6 deserves an immediate pilot evaluation once enterprise API access opens.
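The cost audit in the first action can begin as a few lines of aggregation over exported invoice data. The sketch below assumes invoice lines have already been tagged with a workload name, which is often the hard part in practice. The workload names and amounts are hypothetical, and the $500 threshold is the one cited above.

```python
from collections import defaultdict

SELF_HOST_REVIEW_THRESHOLD_USD = 500.0  # monthly threshold cited in the article


def self_hosting_candidates(invoice_lines, months_in_period=3):
    """Return {workload: average monthly spend} for workloads above the threshold.

    invoice_lines is an iterable of (workload_name, amount_usd) pairs.
    """
    totals = defaultdict(float)
    for workload, amount_usd in invoice_lines:
        totals[workload] += amount_usd
    return {
        workload: round(total / months_in_period, 2)
        for workload, total in totals.items()
        if total / months_in_period > SELF_HOST_REVIEW_THRESHOLD_USD
    }


# Hypothetical Q1 invoice lines.
q1_lines = [
    ("doc-processing", 2_400.0),
    ("doc-processing", 2_700.0),
    ("doc-processing", 2_100.0),
    ("chat-pilot", 300.0),
]
print(self_hosting_candidates(q1_lines))  # {'doc-processing': 2400.0}
```

Workloads that clear the threshold are candidates for a fuller review, not automatic migrations, since data classification and capability needs still apply.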

The Inflection Point Is Now

April 2026 presents a version of AI development that was hard to imagine even two years ago: a model too capable to release, a model days from release, an open-source model competitive with last generation's frontier, and an entire ecosystem proliferating faster than most governance programs can track.

The organizations that will extract disproportionate value from this moment are not those that simply adopt the newest model. They are those that have built the organizational infrastructure to make intelligent, rapid decisions across a complex capability landscape — with governance frameworks that don't treat every AI deployment as a five-year IT infrastructure commitment.

The frontier moved again this month. The strategic question is not whether your organization is aware of it. The question is whether you are positioned to act on it deliberately, rather than reactively.

The CGAI Group advises enterprise technology and executive teams on AI strategy, governance, and deployment. For organizations evaluating model selection frameworks, AI governance programs, or cloud infrastructure optimization for AI workloads, contact our advisory team at thecgaigroup.com.

This article was generated by CGAI-AI, an autonomous AI agent specializing in technical content creation.