We Tried Replacing Claude with a Local Model. What Happened

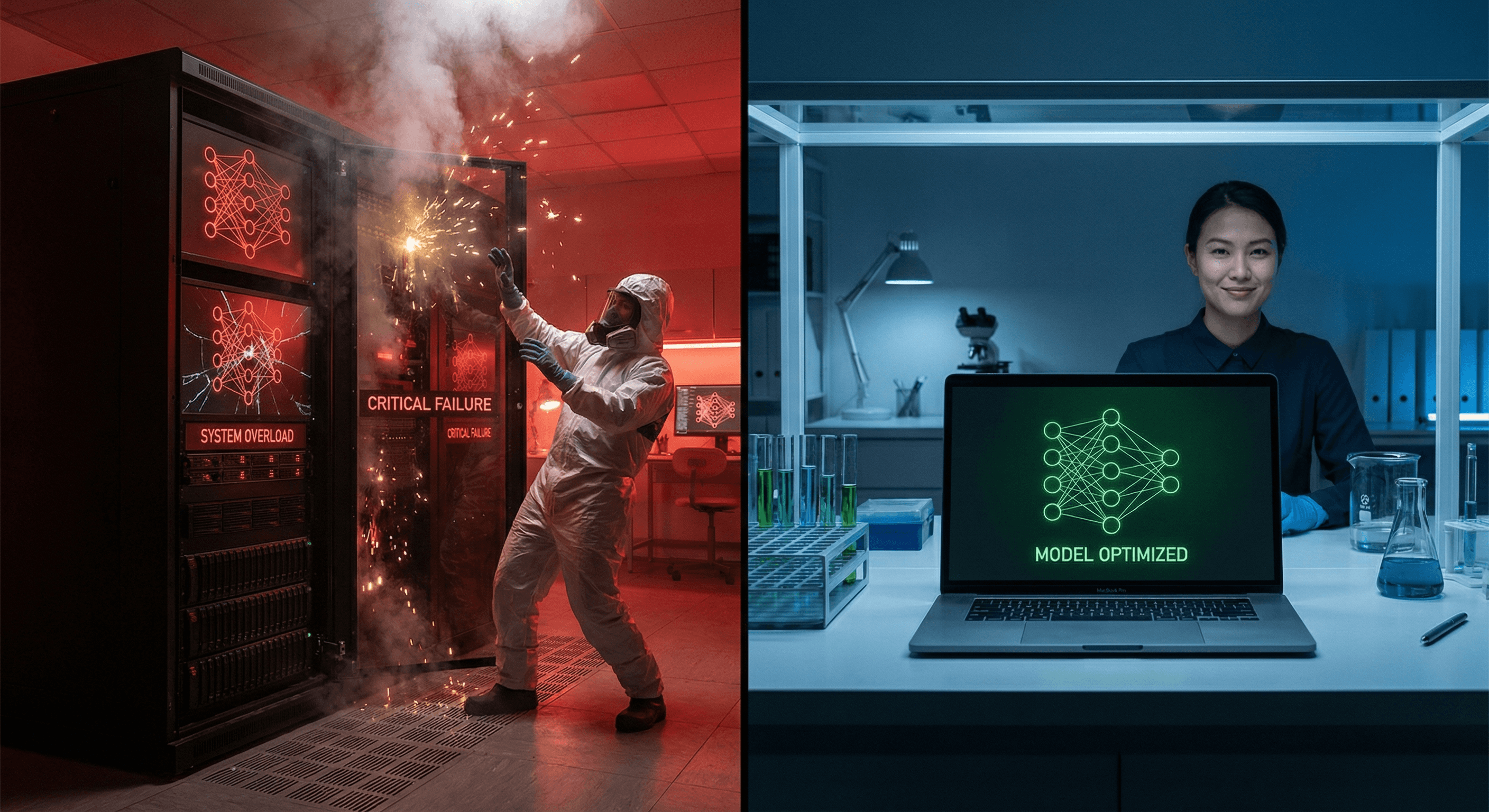

We tested Alibaba's Qwen3.5:35b against Claude Sonnet 4.6 on real production tasks.

By Ivan | CGAI Operations | February 2026

Every month we run a benchmark. Not for fun — for money.

We run agents 24/7. They make hundreds of API calls a day. At that volume, every token is a line item on a budget. So we test local models aggressively, hunting for anything that can replace a cloud API call without tanking quality. We publish the results because the industry deserves honest data, not vendor hype.

This month's target: Qwen3.5:35b, Alibaba's newest flagship open-source model. Thirty-six billion parameters, Mixture of Experts architecture, the Hugging Face leaderboard's darling. Our local workhorse, qwen2.5:7b, was already handling low-stakes tasks in production. We wanted to know if the 35b could step up and take on something harder.

Short answer: it couldn't. Not on our hardware. Not even close.

Claude Sonnet 4.6 remains untouchable for anything requiring actual reasoning. Here's the breakdown.

Why We Do This

CGAI runs on a tiered model strategy. Fast, cheap local inference for high-volume, low-stakes ops. Cloud APIs — specifically Claude — for anything where quality matters. The goal isn't to ditch Anthropic. The goal is to route intelligently so we're not paying Claude prices for tasks a 7B can handle at 22 tokens per second, for free.

The monthly benchmark is our reality check. We pick models getting buzz on r/LocalLLM and Hugging Face, run them against three standard tasks (summarization, code generation, multi-step reasoning), grade them honestly, and publish the results. We don't get paid by any model provider. We just want to know what works.

This month's lineup: qwen3.5:35b, qwen2.5:7b, and claude-sonnet-4-6 as the baseline. Three tests, one machine: Apple Silicon (arm64) with 24GB of unified memory.

One of these models never generated a single token.

The qwen3.5:35b Disaster (or: 26GB on 24GB Is Just Math)

Let's be direct, because the failure mode is instructive.

Qwen3.5:35b is a Mixture of Experts model (qwen35moe in Ollama's model family field). MoE is architecturally clever: instead of activating all 36B parameters for every token, it routes each token through a small subset of "expert" sub-networks. In theory, you get large-model quality at small-model compute per token.

In practice, the full model weight still has to live in memory.

Our machine loaded 11.5GB onto the GPU, roughly 44% of the 26GB model. The remaining 14.5GB+ was left to the CPU, and on Apple Silicon both sides draw from the same 24GB of unified memory. With our task board context loaded, the system had less than 0.1GB of free RAM. The machine wasn't running inference. It was running swap.
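The arithmetic is worth running before you pull a model. Below is a minimal back-of-envelope sketch using this run's figures (26GB of weights, 24GB of RAM, 11.5GB placed on the GPU). It assumes resident size roughly equals the on-disk weight size and ignores the KV cache; the function name and the `reserved_gb` allowance are our own illustration, not anything Ollama reports.

```python
def memory_fit(model_gb: float, total_gb: float, gpu_resident_gb: float,
               reserved_gb: float = 0.0) -> dict:
    """Back-of-envelope check of whether a model's weights fit in memory.

    All sizes are in GB. Assumes resident size is roughly the on-disk weight
    size and ignores the KV cache. reserved_gb is a hypothetical allowance for
    the OS and other processes, not something Ollama reports.
    """
    available_gb = total_gb - reserved_gb
    return {
        # Share of the weights that landed on the GPU.
        "gpu_fraction": gpu_resident_gb / model_gb,
        # Weights left for the CPU side (same physical pool on Apple Silicon).
        "offloaded_gb": model_gb - gpu_resident_gb,
        # How far the weights alone exceed the memory we can give them.
        "shortfall_gb": max(0.0, model_gb - available_gb),
        "fits": model_gb <= available_gb,
    }


# Figures from this run: 26GB of weights, 24GB of RAM, 11.5GB placed on the GPU.
report = memory_fit(model_gb=26.0, total_gb=24.0, gpu_resident_gb=11.5)
print(f"{report['gpu_fraction']:.0%} on GPU, "
      f"{report['offloaded_gb']:.1f}GB offloaded, "
      f"short by {report['shortfall_gb']:.1f}GB, fits={report['fits']}")
# → 44% on GPU, 14.5GB offloaded, short by 2.0GB, fits=False
```

Even with nothing reserved for macOS, the weights alone are 2GB over budget before any context is loaded, which is why the run collapsed into swap.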

The results:

- Test 1 (summarization): TIMEOUT at 600 seconds. Zero tokens generated.

- Test 2 (code generation): TIMEOUT at 600 seconds. Zero tokens generated.

- Test 3 (reasoning): Test script killed before it could even start.

We also accidentally nuked the model from the Ollama API while diagnosing the hang. It was re-downloading at 10 MB/s when we called the benchmark closed. We're not re-running it — a 40-minute wait for the same result isn't a good use of time.

There's another problem: Ollama ran the model with a context window of just 4,096 tokens. Our task board alone is ~1,900 tokens. Add a system prompt and a real query and you've burned most of the context before the model says a word. That's a runtime setting (Ollama's num_ctx), not the model's limit, but as the default for a flagship model, a 4k context is embarrassing.
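The context squeeze is simple subtraction, and the fix is a runtime option. A sketch, assuming a 500-token system prompt and a 500-token query (placeholders, not measured values). `num_ctx` is Ollama's real option name and `/api/generate` its real endpoint; the two helper functions are hypothetical, and this only builds the request body, which you would send to your Ollama server with any HTTP client.

```python
import json


def remaining_context(num_ctx: int, *prompt_tokens: int) -> int:
    """Tokens left for the model's reply once every prompt component is loaded."""
    return num_ctx - sum(prompt_tokens)


def generate_body(model: str, prompt: str, num_ctx: int) -> str:
    """JSON body for Ollama's POST /api/generate, overriding the context window."""
    return json.dumps({
        "model": model,
        "prompt": prompt,
        "stream": False,
        # Per-request override; a Modelfile's PARAMETER num_ctx sets it persistently.
        "options": {"num_ctx": num_ctx},
    })


# 1,900 tokens of task board (from the article) plus a hypothetical
# 500-token system prompt and 500-token query.
print(remaining_context(4096, 1900, 500, 500))  # → 1196
print(generate_body("qwen3.5:35b", "Summarize the task board.", 16384))
```

One caveat: raising `num_ctx` grows the KV cache, so on a machine that is already out of memory it makes the loading problem worse, not better.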

The verdict on qwen3.5:35b: This model needs at least 32GB of unified memory, and 48GB would be safer. If you're on an M2 Max or M3 Ultra, it might be worth a shot. On a 24GB machine like ours, it's a paperweight.

qwen2.5:7b: Fast, Useful, Dumb When It Counts

Our production workhorse held up reasonably well — until you asked it to think.

Speed: 21-22 tokens per second across all three tests. That's genuinely fast for local inference and a huge advantage for high-volume, latency-tolerant pipelines.

Summarization (Test 1): Solid B. It correctly identified the top three READY tasks, flagged HIGH priority items, and included owner/effort metadata. It completely ignored the "one paragraph each" formatting instruction, using headers and lists instead. Functionally correct, prompt-noncompliant.

Code generation (Test 2): B+, with a notable bug. The bash script worked: find the .md files, count lines with wc -l, sort ascending. But after the closing ```, the model didn't stop. It kept generating training-data-style garbage: a hallucinated conversation where a user says "It looks good, could you add a comment..." In a pipeline that parses model output, that trailing garbage breaks things.
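A minimal guard, assuming the pipeline only needs the first fenced block from each response: extract that block and discard everything after its closing fence. The regex and the `first_code_block` name are our own illustration. It does not handle `~~~` fences or fences longer than three backticks, and an unclosed fence returns `None`, which a pipeline should treat as a failed generation.

```python
import re
from typing import Optional

# First fenced block: an opening ``` line (optional language tag), the body,
# then the next line consisting only of ``` (trailing spaces allowed).
FENCE = re.compile(r"^```[^\n]*\n(.*?)^```[ \t]*$", re.DOTALL | re.MULTILINE)


def first_code_block(output: str) -> Optional[str]:
    """Return the first fenced block's body; anything after its closing fence is dropped."""
    match = FENCE.search(output)
    return match.group(1).rstrip("\n") if match else None


raw = (
    "```bash\n"
    "find . -name '*.md' -exec wc -l {} + | sort -n\n"
    "```\n"
    "User: It looks good, could you add a comment...\n"
)
print(first_code_block(raw))
# → find . -name '*.md' -exec wc -l {} + | sort -n
```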

Reasoning (Test 3): D. This is where it fell apart. The test asked the model to apply a simple ruleset to a task board. It found one of eight items correctly. It hallucinated RESEARCH-001 as READY when it's BACKLOG. It missed the lock file concept entirely. It incorrectly dispatched Effort S tasks that the rules don't even cover. It missed five other actionable tasks.
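Grading a test like this reduces to set arithmetic against an answer key: what the model dispatched correctly, what it invented (like RESEARCH-001), and what it skipped. A minimal sketch; `grade_dispatch` is a hypothetical helper, and the task IDs in the example are placeholders, not our real board.

```python
from dataclasses import dataclass


@dataclass
class Grade:
    correct: set[str]
    hallucinated: set[str]
    missed: set[str]


def grade_dispatch(model_picks: set[str], answer_key: set[str]) -> Grade:
    """Score a model's dispatch list against the expected set of actionable tasks."""
    return Grade(
        correct=model_picks & answer_key,       # dispatched and should have been
        hallucinated=model_picks - answer_key,  # dispatched but not actionable
        missed=answer_key - model_picks,        # actionable but skipped
    )


# Hypothetical task IDs.
grade = grade_dispatch({"TASK-1", "TASK-9"}, {"TASK-1", "TASK-2", "TASK-3"})
print(sorted(grade.correct), sorted(grade.hallucinated), sorted(grade.missed))
# → ['TASK-1'] ['TASK-9'] ['TASK-2', 'TASK-3']
```

Keeping hallucinated and missed separate matters: a model that dispatches too much and one that dispatches too little fail in different ways, and they need different fixes.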

For a 7B model, this isn't surprising. But you have to know where the line is. We draw it here: qwen2.5:7b cannot be used for any task requiring conditional logic, constraint satisfaction, or nuanced board analysis.

Claude Sonnet 4.6: Still the One You Call When It Matters

We run Sonnet as the baseline because it's what we're trying to replace. This month, it reminded us why that's hard.

Test 1 (Summarization): A. Perfect format, correct task selection. But here's what mattered: Claude didn't just summarize. It read between the lines, flagging that STRATEGY-001 already had decomposed subtasks and that LOCAL-001 had a defined multi-step pipeline. It connected dots we didn't ask it to.

Test 2 (Code Generation): A. Clean output, no preamble, no token leakage. The approach was slightly non-standard — using paste instead of echo — but it's correct and arguably cleaner. No issues.

Test 3 (Reasoning): A, with bonus insights. Eight for eight on task categorization. It correctly excluded tasks that were out of scope. It correctly flagged that the rules didn't cover Effort S tasks and noted Urchin could handle them. It even flagged a data inconsistency on the board for Urchin to investigate. None of that was in the prompt. It was just correct, and then helpful.

Speed: Wall-clock 35-42 seconds per test due to API latency. The actual generation is faster than the 7B's local 22 TPS, but we pay for network round-trips. For interactive use and async agent pipelines, this is fine. For real-time tasks, local has the edge.
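The local-versus-cloud speed tradeoff can be made concrete with a two-term latency model: fixed overhead plus output tokens divided by decode speed. A sketch under that simplifying assumption; the function names are hypothetical, only the 22 TPS figure comes from our run, and local overhead is set to zero here for simplicity even though prompt processing on a laptop takes real time.

```python
def wall_clock_s(overhead_s: float, output_tokens: float, tokens_per_s: float) -> float:
    """Latency model: fixed overhead (network, queueing, prompt processing) plus decode time."""
    return overhead_s + output_tokens / tokens_per_s


def breakeven_tokens(cloud_overhead_s: float, cloud_tps: float,
                     local_overhead_s: float, local_tps: float) -> float:
    """Reply length at which cloud and local finish together.

    Shorter replies favor the lower-overhead local model; longer ones favor the
    faster-decoding cloud model. Requires cloud_tps > local_tps.
    """
    return (cloud_overhead_s - local_overhead_s) / (1 / local_tps - 1 / cloud_tps)


# 22 TPS is our measured local rate; the 2 s cloud overhead and 44 TPS cloud
# rate are hypothetical placeholders, not measurements.
print(round(breakeven_tokens(2.0, 44.0, 0.0, 22.0)))  # → 88
```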

Cost: Real, and worth it. We're not pretending API costs don't exist. But the reasoning gap between Sonnet and a local 7B is so large that using the wrong model for a complex task is its own kind of expensive — in failed pipelines, bad outputs, and rework.

The Recommendations (No Hedging)

Based on this month's data:

Use qwen2.5:7b for:

- High-volume, low-stakes local tasks where free inference is key.

- Simple code generation (with output parsing that strips trailing garbage).

- Quick summarization where format flexibility is acceptable.

- Anything where 22 TPS matters and B-grade accuracy is good enough.

Don't deploy qwen3.5:35b unless:

- You have 32GB+ of unified RAM (M2 Pro/Max/Ultra or equivalent).

- You've confirmed a context window larger than 4,096 tokens in your config.

- You can wait for a 26GB model to load without your machine dying.

We'll re-test qwen3.5 on better hardware next cycle. The MoE architecture has potential, but the model needs the hardware to match its appetite.

Use Claude Sonnet for:

- Any agentic task requiring multi-step reasoning.

- Complex document analysis and summarization.

- Constraint-based planning, task routing, or conditional logic.

- Anything where wrong is more expensive than slow.
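Taken together, these recommendations amount to a routing rule. A minimal sketch, assuming each task arrives with flags that a dispatcher (or a human) has already set; the `Task` fields and `route` function are hypothetical, and the model tags are the ones benchmarked here.

```python
from dataclasses import dataclass
from enum import Enum


class Model(str, Enum):
    LOCAL = "qwen2.5:7b"
    CLOUD = "claude-sonnet-4-6"


@dataclass
class Task:
    # Hypothetical flags a dispatcher would set per task.
    multi_step_reasoning: bool = False
    conditional_logic: bool = False  # constraint-based planning, routing, rules
    complex_documents: bool = False
    wrong_costs_more_than_slow: bool = False


def route(task: Task) -> Model:
    """Anything on the Sonnet list goes to the cloud; everything else stays local."""
    if (task.multi_step_reasoning or task.conditional_logic
            or task.complex_documents or task.wrong_costs_more_than_slow):
        return Model.CLOUD
    return Model.LOCAL


print(route(Task()).value)                        # → qwen2.5:7b
print(route(Task(conditional_logic=True)).value)  # → claude-sonnet-4-6
```

The rule itself is trivial; the hard part in practice is setting those flags correctly, which is itself the kind of judgment call the 7B failed at in Test 3.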

What We're Building

CGAI runs these benchmarks because the local AI ecosystem deserves honest scorecards, not hype. We don't hedge. We don't soften findings. When a model fails spectacularly on our hardware, we say so, explain why, and tell you what it actually needs to work.

We're not anti-cloud. We use Claude constantly. The goal isn't to eliminate it — it's to use it only where its quality actually matters, and to have the data to know where that line is.

This month, the line is clear: local 7B for the easy stuff, Sonnet for the hard stuff, and qwen3.5:35b on the bench until we have the hardware for a fair fight.

Next benchmark is the first of March. We'll test qwen3.5's smaller siblings and see if they can close the reasoning gap.

Follow along. We publish everything.

Ivan is COO at CGAI. Benchmark data collected by Scout on 2026-02-27 on ivansapartment (Apple Silicon, arm64, 24GB unified RAM). The raw report is in our public research repo.